Multi-Object Search (MOS)

The purpose of this example is to introduce conventions for building a project around a more complicated POMDP, one whose components are better kept separate for maintainability and readability. We first introduce the task at a relatively high level, then describe the conventions for the project structure. These conventions can help you organize your project, make the code more readable, and share components between different POMDPs.

Problem overview

This task was introduced by Wandzel et al. [1]. We provide a slightly different implementation that does not consider rooms or a topological graph.

As in the paper, we formulate this task as an Object-Oriented POMDP (OO-POMDP); pomdp_py provides the necessary interfaces to describe an OO-POMDP (see the pomdp_py.framework.oopomdp module).
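In an OO-POMDP, the state is a collection of per-object states, so each object (the robot and every target) can be read and reasoned about individually. The sketch below illustrates that idea in plain Python. The class names mirror the concepts but are hypothetical: pomdp_py's own `ObjectState` and `OOState` in the module above play this role, and are not used here so the example runs standalone. The fields (`objclass`, `pose`, `theta`, `objects_found`) are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

Pose = Tuple[int, int]  # a single grid cell (x, y)

@dataclass(frozen=True)
class ObjectState:
    """Hypothetical state of one object: its class and the grid cell it occupies."""
    objclass: str
    pose: Pose

@dataclass(frozen=True)
class RobotState(ObjectState):
    """The robot is also an object; here it additionally tracks heading and found targets."""
    theta: float = 0.0
    objects_found: Tuple[int, ...] = ()

class OOState:
    """A joint state factored into object states, keyed by object id."""

    def __init__(self, object_states: Dict[int, ObjectState]):
        self.object_states = dict(object_states)

    def get_object_state(self, objid: int) -> ObjectState:
        return self.object_states[objid]

    def target_poses(self) -> Dict[int, Pose]:
        # Only the target objects are what the robot is searching for.
        return {i: s.pose for i, s in self.object_states.items() if s.objclass == "target"}

state = OOState({
    0: RobotState("robot", (0, 0), theta=0.0),
    1: ObjectState("target", (3, 2)),
    2: ObjectState("target", (4, 4)),
})
print(state.target_poses())
```

Keeping each object's state separate is what later lets the belief be maintained per object instead of over the joint space of all objects.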

In our implementation, an agent searches for \(n\) objects in a \(W\) by \(L\) gridworld. Both the agent and the objects are represented by single grid cells. The agent can take three categories of actions:

Motion: moves the robot.

Look: projects a sensing region and receives an observation.

Find: marks objects within the sensing region as found.
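The three action categories can be sketched as small immutable value types. This is a hypothetical illustration, not the classes in the MOS action.py: in a pomdp_py project they would subclass `pomdp_py.Action`, while here they are plain dataclasses so the block runs on its own. The four grid-offset motions and the `apply_motion` helper (which blocks moves off the grid or into an obstacle) are assumptions; the actual motion model may also rotate the robot.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class MotionAction:
    """Moves the robot by a grid offset (dx, dy); rotation is ignored in this sketch."""
    name: str
    delta: Tuple[int, int]

@dataclass(frozen=True)
class LookAction:
    """Projects the sensing region; the environment answers with an observation."""
    name: str = "look"

@dataclass(frozen=True)
class FindAction:
    """Marks every object inside the current sensing region as found."""
    name: str = "find"

# Hypothetical motion set; y is assumed to grow downward, as in row-major maps.
MOVE_LEFT = MotionAction("move-left", (-1, 0))
MOVE_RIGHT = MotionAction("move-right", (1, 0))
MOVE_UP = MotionAction("move-up", (0, -1))
MOVE_DOWN = MotionAction("move-down", (0, 1))
ALL_ACTIONS = [MOVE_LEFT, MOVE_RIGHT, MOVE_UP, MOVE_DOWN, LookAction(), FindAction()]

def apply_motion(pose, action, width, length, obstacles=frozenset()):
    """Return the robot's next cell; moves off the W x L grid or into an obstacle are blocked."""
    x, y = pose
    dx, dy = action.delta
    nx, ny = x + dx, y + dy
    if 0 <= nx < width and 0 <= ny < length and (nx, ny) not in obstacles:
        return (nx, ny)
    return pose  # blocked: the robot stays where it is

print(apply_motion((0, 0), MOVE_RIGHT, width=5, length=5))
```

Making the actions frozen (hashable) matters for tree-search planners such as POUCT, which typically key child nodes by action.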

The sensing region is fan-shaped; our implementation allows adjusting both the angle of the fan and the sensing range. It is based on a laser scanner model. When the angle is set to 360 degrees, the sensor projects a disk-shaped sensing region. Occlusion (i.e., blocking of laser scan beams) is implemented, but there are artifacts due to the discretization of the search space.
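The geometry of a fan-shaped region reduces to two checks per cell: the distance from the robot must fall within the sensing range, and the bearing to the cell must lie within half the fan angle of the robot's heading. The sketch below is a simplification under stated assumptions: the pose is `(x, y, theta)` with `theta` in radians from the +x axis, cells are treated as points, and occlusion is ignored, so it does not reproduce the laser-beam model or its discretization artifacts. The function names are hypothetical.

```python
import math

def in_sensing_region(robot_pose, cell, fov_deg, max_range, min_range=0.0):
    """Return True if `cell` lies in the fan projected from `robot_pose` (no occlusion)."""
    rx, ry, rth = robot_pose
    cx, cy = cell
    dist = math.hypot(cx - rx, cy - ry)
    if dist < min_range or dist > max_range:
        return False
    if fov_deg >= 360:
        return True  # a 360-degree fan is a disk: range alone decides
    bearing = math.atan2(cy - ry, cx - rx)
    # Signed angle between bearing and heading, wrapped into [-pi, pi).
    diff = (bearing - rth + math.pi) % (2 * math.pi) - math.pi
    return abs(diff) <= math.radians(fov_deg) / 2

def visible_cells(robot_pose, width, length, fov_deg, max_range):
    """All cells of a width x length grid that fall inside the sensing region."""
    return {(x, y) for x in range(width) for y in range(length)
            if in_sensing_region(robot_pose, (x, y), fov_deg, max_range)}

print(sorted(visible_cells((2, 2, 0.0), 5, 5, fov_deg=360, max_range=1)))
```

Setting `fov_deg=360` yields the disk-shaped region mentioned above; narrower angles keep only cells in front of the robot.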

The transition, observation and reward models are implemented according to the original paper.

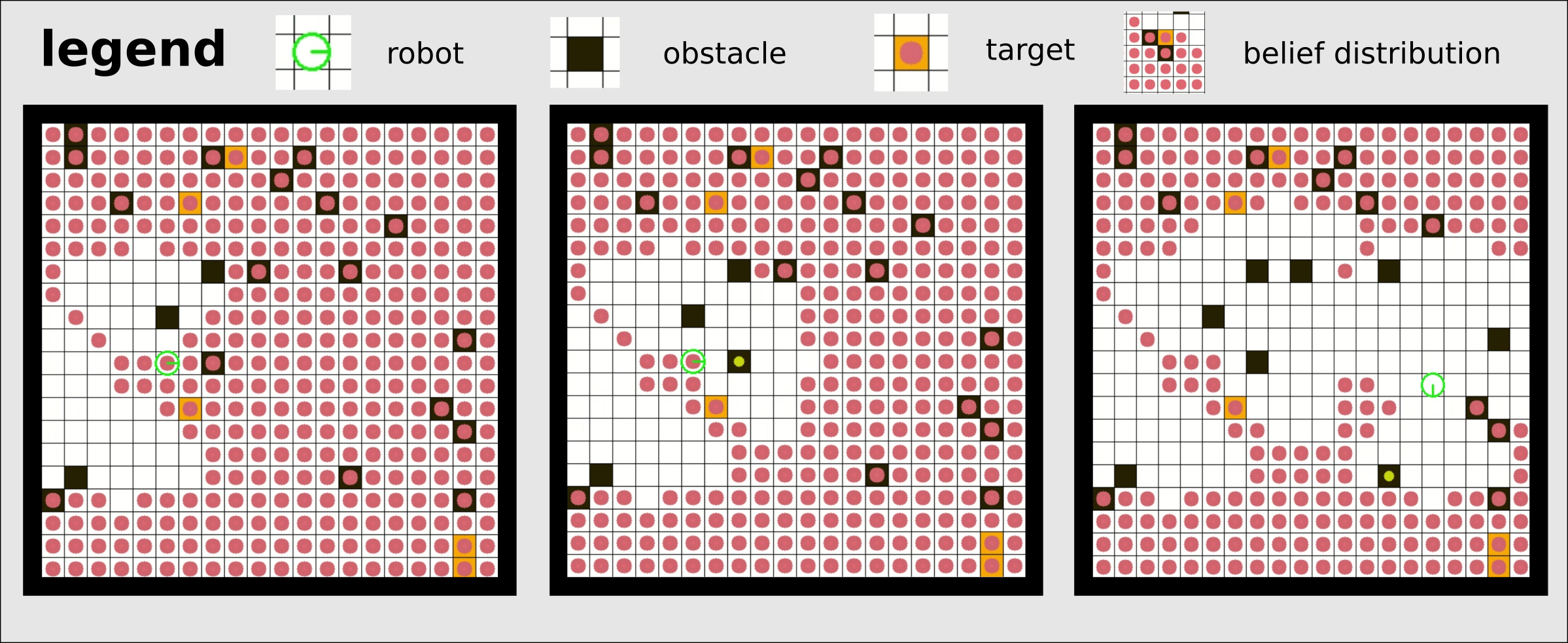

The figure above shows screenshots of frames from a run of the MOS task implemented in pomdp_py, using the POUCT solver. From the first to the second image, the robot takes a Look action and projects a fan-shaped sensing region. This leads to a belief update (i.e., clearing of the red circles). A perfect observation model is used in this example run. The third image shows a later frame.
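With a perfect observation model, the belief update shown in the figure is easy to state for a single target. If the target is not detected, every cell in the sensing region becomes impossible, and the remaining probability is renormalized. If it is detected, all probability moves to the observed cell. The sketch below assumes a histogram belief (a dict from cell to probability) for one target; the function name and representation are hypothetical and may differ from the belief in the MOS agent/belief.py. In the OO-POMDP setting the same update would be applied to each target's belief separately.

```python
def update_belief(belief, region, observed_pose):
    """Bayes update of one target's location belief after Look, assuming a noise-free detector.

    belief: dict mapping cell -> probability
    region: set of cells inside the sensing region
    observed_pose: cell where the target was detected, or None if it was not detected
    """
    if observed_pose is not None:
        # A perfect detector reports the true cell, so all mass moves there.
        return {cell: (1.0 if cell == observed_pose else 0.0) for cell in belief}
    # No detection: the target cannot be in any cell the sensor covered.
    new_belief = {cell: (0.0 if cell in region else p) for cell, p in belief.items()}
    total = sum(new_belief.values())
    if total == 0:
        raise ValueError("observation is inconsistent with the prior belief")
    return {cell: p / total for cell, p in new_belief.items()}

cells = [(x, y) for x in range(2) for y in range(2)]
prior = {c: 0.25 for c in cells}  # uniform prior over a 2 x 2 grid
posterior = update_belief(prior, region={(0, 0), (1, 0)}, observed_pose=None)
print(posterior)
```

The zeroed cells correspond to the red circles that disappear between the first and second frames.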

Implementing this POMDP: Conventions

The Summary section of Tiger describes the general procedure for implementing a POMDP problem with pomdp_py.

In a more complicated problem like MOS, squeezing everything into a single giant file makes the code hard to maintain. We may also want to extend this problem or reuse its models in a different POMDP. The code base should therefore be better organized. Below we recommend a package structure for using pomdp_py in this situation. You are free to organize your code however you like, but following this convention may save you time.

The package structure (for our MOS implementation) is as follows:

├── domain
│   ├── state.py
│   ├── action.py
│   ├── observation.py
│   └── __init__.py
├── models
│   ├── transition_model.py
│   ├── observation_model.py
│   ├── reward_model.py
│   ├── policy_model.py
│   ├── components
│   │   ├── grid_map.py
│   │   └── sensor.py
│   └── __init__.py
├── agent
│   ├── agent.py
│   ├── belief.py
│   └── __init__.py
├── env
│   ├── env.py
│   ├── visual.py
│   └── __init__.py
├── problem.py
├── example_worlds.py
└── __init__.py

The recommendation is to separate the code for the domain, models, agent, and environment, and to use simple, generic filenames.

As the package tree above shows, files such as state.py and transition_model.py are self-evident in their role. The problem.py file is where the MosOOPOMDP class is defined and where the logic of the action-feedback loop is implemented (see Tiger for more detail).
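The action-feedback loop in problem.py follows a fixed cycle: the planner picks an action from the agent's belief, the environment executes it and returns a reward, the environment emits an observation, and the agent updates its belief. The sketch below shows only that control flow, using a toy one-dimensional corridor so it runs without pomdp_py. Every class and method name here (`execute`, `observe`, `update`, `CorridorEnv`, `GreedyPlanner`) is hypothetical and is not pomdp_py's API; see Tiger for the loop pomdp_py actually uses.

```python
def run_loop(planner, agent, env, max_steps=10):
    """Plan, act, observe, update; stop when the task is done or steps run out."""
    total_reward = 0.0
    for step in range(max_steps):
        action = planner.plan(agent)          # choose an action from the agent's belief
        reward = env.execute(action)          # the true state changes in the environment
        observation = env.observe(action)     # the environment emits an observation
        agent.update(action, observation)     # belief (and history) update
        total_reward += reward
        if env.done:
            break
    return total_reward, step + 1

class CorridorEnv:
    """Toy corridor: the robot moves right and must 'find' the target at a fixed cell."""
    def __init__(self, length, target):
        self.length, self.target = length, target
        self.robot, self.done = 0, False

    def execute(self, action):
        if action == "right":
            self.robot = min(self.robot + 1, self.length - 1)
            return -1.0  # step cost
        if action == "find":
            self.done = self.robot == self.target
            return 10.0 if self.done else -10.0
        raise ValueError(f"unknown action {action!r}")

    def observe(self, action):
        return self.robot == self.target  # perfect detector at the robot's cell

class CorridorAgent:
    def __init__(self):
        self.seen_target = False

    def update(self, action, observation):
        self.seen_target = observation

class GreedyPlanner:
    def plan(self, agent):
        return "find" if agent.seen_target else "right"

print(run_loop(GreedyPlanner(), CorridorAgent(), CorridorEnv(length=5, target=3)))
```

In MOS, the planner would be POUCT, the belief would be the per-object belief, and the observation would come from the sensor model; only the cycle itself carries over.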

Try it

To try out the MOS example problem:

$ python -m pomdp_py.problems.multi_object_search.problem

A gridworld with randomly placed obstacles, targets, and robot initial pose is generated; the robot is equipped with either a disk-shaped sensor or a laser sensor [source]. A command-line interface is not yet provided; check interpret, equip_sensors, make_laser_sensor, and make_proximity_sensor, as well as the source code linked above, for details about how to create your own custom instance of the problem.
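A convenient way to describe a custom instance is an ASCII map of the gridworld. The sketch below generates a random map and parses it back into the grid size, robot cell, obstacles, and targets. The symbols (`r` robot, `x` obstacle, `T` target, `.` free) and both function names are assumptions for illustration; they are not the signature of `interpret` and may not match the format it expects.

```python
import random

def random_world(width, length, n_targets, n_obstacles, seed=None):
    """Build a hypothetical ASCII map with a random robot cell, targets, and obstacles."""
    rng = random.Random(seed)
    cells = [(x, y) for y in range(length) for x in range(width)]
    chosen = rng.sample(cells, 1 + n_targets + n_obstacles)  # distinct cells
    grid = [["."] * width for _ in range(length)]
    rx, ry = chosen[0]
    grid[ry][rx] = "r"
    for x, y in chosen[1:1 + n_targets]:
        grid[y][x] = "T"
    for x, y in chosen[1 + n_targets:]:
        grid[y][x] = "x"
    return "\n".join("".join(row) for row in grid)

def parse_world(worldstr):
    """Parse the map into (width, length, robot cell, obstacle set, target list)."""
    rows = [line.strip() for line in worldstr.strip().splitlines()]
    length, width = len(rows), len(rows[0])
    robot, obstacles, targets = None, set(), []
    for y, row in enumerate(rows):
        if len(row) != width:
            raise ValueError("all rows must have the same width")
        for x, ch in enumerate(row):
            if ch == "r":
                robot = (x, y)
            elif ch == "x":
                obstacles.add((x, y))
            elif ch == "T":
                targets.append((x, y))
            elif ch != ".":
                raise ValueError(f"unknown symbol {ch!r} at {(x, y)}")
    if robot is None:
        raise ValueError("map must contain a robot 'r'")
    return width, length, robot, obstacles, targets

world = random_world(6, 4, n_targets=2, n_obstacles=3, seed=0)
print(world)
print(parse_world(world))
```

Passing a fixed `seed` makes a randomly generated instance reproducible, which helps when comparing solvers on the same world.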

[1] Arthur Wandzel, Yoonseon Oh, Michael Fishman, Nishanth Kumar, and Stefanie Tellex. Multi-object search using object-oriented pomdps. In 2019 International Conference on Robotics and Automation (ICRA), 7194–7200. IEEE, 2019.